Predictive Analytics for Optimizing Warehouse Labor Efficiency

Forecasting Demand Fluctuations to Optimize Staffing

Understanding the Importance of Demand Forecasting

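Accurately forecasting demand fluctuations is essential for businesses that want to optimize staffing. By anticipating periods of high demand, they can adjust staffing levels in advance.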
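To make the link between a demand forecast and a staffing level concrete, here is a minimal sketch in Python. It assumes a very simple forecasting rule (the average historical volume for each weekday) and a hypothetical productivity figure (`units_per_worker_shift`). The function names, the 10% safety buffer, and the sample numbers are illustrative assumptions, not values from a real warehouse or a specific forecasting product.

```python
import math
from collections import defaultdict
from statistics import mean


def forecast_by_weekday(history):
    """Average historical units per weekday.

    history: iterable of (weekday, units) pairs, weekday 0=Mon .. 6=Sun.
    Returns a dict mapping weekday -> mean units observed on that weekday.
    """
    buckets = defaultdict(list)
    for weekday, units in history:
        buckets[weekday].append(units)
    return {day: mean(values) for day, values in buckets.items()}


def staff_needed(forecast_units, units_per_worker_shift, buffer=0.1):
    """Convert a volume forecast into a headcount, rounded up.

    buffer is an assumed safety margin on top of the forecast (10% here).
    """
    if units_per_worker_shift <= 0:
        raise ValueError("units_per_worker_shift must be positive")
    return math.ceil(forecast_units * (1 + buffer) / units_per_worker_shift)


# Illustrative data only: two Mondays and two Saturdays of order volume.
history = [(0, 1000), (0, 1200), (5, 400), (5, 600)]
forecast = forecast_by_weekday(history)

# Assumed productivity: one worker handles 200 units per shift.
monday_staff = staff_needed(forecast[0], units_per_worker_shift=200)
saturday_staff = staff_needed(forecast[5], units_per_worker_shift=200, buffer=0.0)
```

A weekday average is only a baseline. A production forecast would typically also account for trend, promotions, and holidays, but the step from forecast volume to headcount stays the same.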

Optimizing Task Allocation and Routing to Improve Productivity

Predictive Modeling for Task Prioritization

Predictive analytics plays a key role in optimizing task allocation by analyzing historical data and identifying patterns.
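As one possible illustration of using historical data to prioritize tasks, the sketch below estimates how long each task type usually takes from past records, then orders open tasks by slack (time until due minus expected duration). This least-slack-first heuristic is a stand-in chosen for simplicity, not a method described in the article. The `Task` fields, the function names, and the 15-minute default for unseen task types are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass
class Task:
    task_id: str
    task_type: str
    minutes_until_due: float


def expected_durations(history):
    """Mean historical duration per task type.

    history: iterable of (task_type, minutes) pairs from completed tasks.
    """
    buckets = defaultdict(list)
    for task_type, minutes in history:
        buckets[task_type].append(minutes)
    return {task_type: mean(values) for task_type, values in buckets.items()}


def prioritize(tasks, durations, default_minutes=15.0):
    """Return tasks ordered by slack, most urgent (least slack) first.

    Task types missing from the history fall back to default_minutes,
    which is an assumed placeholder value.
    """
    def slack(task):
        expected = durations.get(task.task_type, default_minutes)
        return task.minutes_until_due - expected

    return sorted(tasks, key=slack)


# Illustrative history and open tasks.
history = [("pick", 10), ("pick", 14), ("pack", 5)]
durations = expected_durations(history)

tasks = [
    Task("A", "pick", minutes_until_due=30),
    Task("B", "pack", minutes_until_due=20),
    Task("C", "putaway", minutes_until_due=25),
]
ordered_ids = [task.task_id for task in prioritize(tasks, durations)]
```

With these sample values, the task whose type has no history is scheduled first because the fallback duration leaves it the least slack. In practice the duration estimate could come from a richer model, and the ordering step would not need to change.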

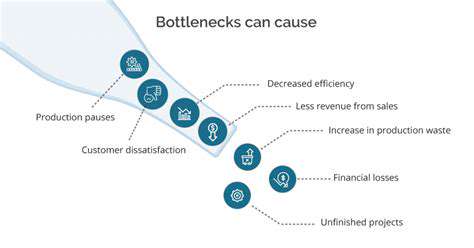

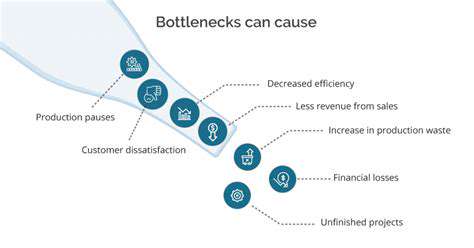

Predicting and Preventing Bottlenecks for Lean Operations
