Digital twin for simulating supply chain resilience against cybersecurity threats

Simulating Cyberattacks and Evaluating Resilience

Understanding the Role of Simulation in Cyberattack Preparedness

Simulating cyberattacks is a practical way to evaluate the resilience of digital systems, particularly in supply chains. By replicating real-world threats in a controlled environment, organizations can find vulnerabilities before malicious actors exploit them. This proactive approach lets teams develop and test defensive strategies, adjust security protocols and procedures based on what the simulations reveal, and manage risk with a clearer picture of where they are exposed.

A key requirement for effective simulation is an accurate representation of real-world attack vectors, covering both known and emerging techniques at the level of sophistication seen in modern threats. Simulations should span a range of scenarios, from simple denial-of-service attacks to complex, targeted intrusions. Testing against this diversity gives organizations a more complete view of their systems' vulnerabilities and makes the resulting security assessments more representative of what a real attacker might attempt.

Developing a Digital Twin for Supply Chain Security

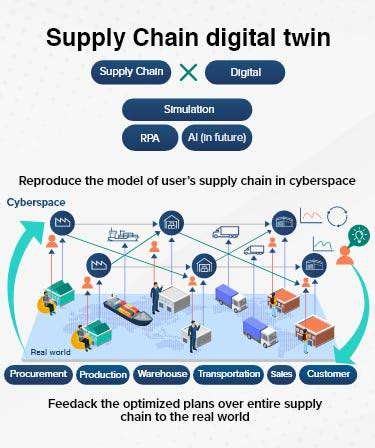

A digital twin, a virtual representation of a physical system, offers a powerful platform for simulating cyberattacks on supply chains. By building a digital replica of the entire supply chain, from raw-material sourcing to final product delivery, organizations can identify potential entry points for cyber threats and evaluate how an attack would affect each stage of the process. This end-to-end view supports a comprehensive analysis of the supply chain's vulnerabilities, so that security measures can be put in place before an attack occurs.

A digital twin for supply chain security can model individual operational processes, including logistics, inventory management, and communication networks. This granularity is necessary for simulating cyberattacks that target those specific areas. When the twin replicates these processes accurately, organizations can see how an attack might disrupt the supply chain and pinpoint choke points or weak links. The same model can then be used to test different response strategies and defensive measures, leading to a more resilient and secure supply chain.
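To make the idea of choke points concrete, the sketch below treats the twin's topology as a directed graph and checks, one node at a time, whether compromising that node leaves some retailer unreachable from every supplier. The topology in `SUPPLY_CHAIN` and all function names are hypothetical, and a compromised node is assumed to go fully offline; a real twin would also model partial degradation, capacity limits on alternate routes, and non-physical dependencies such as shared IT systems.

```python
from collections import deque

# Hypothetical topology: each node maps to the nodes it ships to.
SUPPLY_CHAIN = {
    "supplier_a": ["plant"],
    "supplier_b": ["plant"],
    "plant": ["warehouse"],
    "warehouse": ["retailer_east", "retailer_west"],
    "retailer_east": [],
    "retailer_west": [],
}


def reachable(graph, source, compromised=frozenset()):
    """Breadth-first search from source that never enters a compromised node."""
    if source in compromised:
        return set()
    seen = {source}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, []):
            if nxt not in seen and nxt not in compromised:
                seen.add(nxt)
                queue.append(nxt)
    return seen


def served_sinks(graph, sources, sinks, compromised=frozenset()):
    """Sinks that at least one source can still reach."""
    covered = set()
    for source in sources:
        covered |= reachable(graph, source, compromised)
    return covered & set(sinks)


def choke_points(graph, sources, sinks):
    """Intermediate nodes whose compromise leaves at least one sink unserved."""
    baseline = served_sinks(graph, sources, sinks)
    result = []
    for node in graph:
        if node in sources or node in sinks:
            continue  # only intermediate nodes are tested
        if served_sinks(graph, sources, sinks, frozenset({node})) != baseline:
            result.append(node)
    return sorted(result)
```

With this topology, `choke_points(SUPPLY_CHAIN, ["supplier_a", "supplier_b"], ["retailer_east", "retailer_west"])` returns `["plant", "warehouse"]`; adding a second distribution center that also serves both retailers would remove the warehouse from that list.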

Evaluating and Improving Resilience Through Simulated Attacks

Evaluating resilience through simulated cyberattacks is a crucial step in fortifying a supply chain's defenses. Controlled tests show how well existing security protocols perform and where they need improvement. Repeating the cycle of simulation and evaluation keeps security measures current as new threats emerge. Critically, the evaluation should also cover the response time and effectiveness of the security teams and processes already in place.
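Response time can be summarized with simple metrics computed from the exercise log. The sketch below assumes a hypothetical record format with three timestamps per simulated incident (attack start, detection, confirmed containment) and reports the mean time to detect and the mean time to contain. Definitions of these metrics vary between organizations, so the intervals used here are an assumption to adapt.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class SimulatedIncident:
    scenario: str
    started: float    # minutes into the exercise when the attack began
    detected: float   # minutes when the security team raised an alert
    contained: float  # minutes when containment was confirmed


def response_metrics(incidents):
    """Mean time to detect (start to detection) and mean time to contain (detection to containment)."""
    if not incidents:
        raise ValueError("no incidents to evaluate")
    for inc in incidents:
        if not inc.started <= inc.detected <= inc.contained:
            raise ValueError(f"timestamps out of order for {inc.scenario!r}")
    return {
        "mean_time_to_detect": mean(inc.detected - inc.started for inc in incidents),
        "mean_time_to_contain": mean(inc.contained - inc.detected for inc in incidents),
    }
```

Tracking these two numbers across repeated exercises shows whether detection and containment are actually improving between simulation rounds.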

Analyzing simulated attacks yields data on the potential impact of different cyber threats, including financial losses, operational disruptions, and reputational damage. By measuring these outcomes, organizations can prioritize vulnerabilities and direct security investment toward the most critical areas, maximizing the return on those initiatives. The same measurements show how effectively security tools and personnel respond to each simulated attack.
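One common way to turn simulation outcomes into priorities is to rank scenarios by expected loss: the estimated likelihood of an attack multiplied by its simulated impact. In the sketch below, the `ScenarioOutcome` fields, the per-hour downtime cost, and any scenario values are illustrative assumptions; reputational cost in particular is usually an analyst estimate rather than something the twin measures directly.

```python
from dataclasses import dataclass


@dataclass
class ScenarioOutcome:
    name: str
    likelihood: float         # estimated probability of occurring within a year (assumption)
    direct_loss: float        # financial loss measured in the simulation
    downtime_hours: float     # operational disruption measured in the simulation
    reputational_cost: float  # analyst estimate; rarely measurable directly


def prioritize(outcomes, cost_per_downtime_hour):
    """Rank scenarios by expected loss = likelihood * total simulated impact, highest first."""
    ranked = []
    for o in outcomes:
        impact = (
            o.direct_loss
            + o.downtime_hours * cost_per_downtime_hour
            + o.reputational_cost
        )
        ranked.append((o.name, round(o.likelihood * impact, 2)))
    return sorted(ranked, key=lambda item: item[1], reverse=True)
```

Expected loss is only one possible scoring rule; organizations with low tolerance for rare but severe events may prefer to rank by worst-case impact instead.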

Thorough post-simulation analysis is essential. It involves reviewing the attack scenarios, identifying the vulnerabilities they exposed, and evaluating how well the implemented countermeasures and incident response plans performed. The resulting insights feed back into the defenses, so that they are refined continuously and remain effective against emerging threats.

Predictive Modeling and Proactive Security Measures

Understanding Predictive Modeling

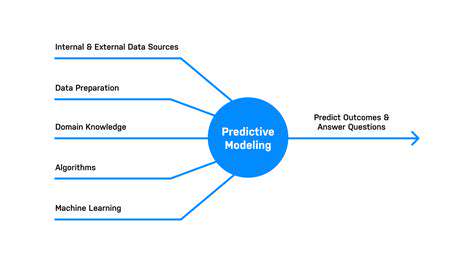

Predictive modeling is a powerful technique that uses historical data to build mathematical models that can predict future outcomes. These models analyze patterns and relationships within the data to identify trends and forecast future values. This process is crucial for making informed decisions across various industries, from finance and healthcare to marketing and manufacturing. By understanding past behaviors, predictive models can anticipate future events, enabling proactive measures to be taken.
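As a minimal illustration, the sketch below fits a straight line to historical observations by ordinary least squares and extrapolates one step ahead. The data (weekly counts of blocked intrusion attempts) is made up, and real predictive models usually involve more variables, validation on held-out data, and uncertainty estimates.

```python
def fit_linear(xs, ys):
    """Ordinary least squares fit of y = intercept + slope * x; returns (intercept, slope)."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need at least two paired observations")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("x values must not all be identical")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope


def predict(model, x):
    """Evaluate the fitted line at x."""
    intercept, slope = model
    return intercept + slope * x


# Illustrative data: weeks 1-5 and made-up counts of blocked intrusion attempts.
weeks = [1, 2, 3, 4, 5]
blocked = [3, 5, 7, 9, 11]
model = fit_linear(weeks, blocked)
forecast_week_6 = predict(model, 6)
```

Here the fit gives an intercept of 1.0 and a slope of 2.0, so the forecast for week 6 is 13.0.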

A key aspect of predictive modeling is the selection of relevant variables. Identifying the factors that most significantly influence the outcome being predicted is essential for building accurate and reliable models. This involves careful consideration of the data available and understanding the potential relationships between different variables.
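A simple first pass at variable selection is to rank candidate features by the strength of their correlation with the outcome. The sketch below uses the absolute Pearson correlation coefficient; any feature names used with it are hypothetical. Pearson correlation only captures linear relationships and ignores interactions between features, so it is a screening step rather than a substitute for domain knowledge or model-based selection.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient; returns 0.0 if either series is constant."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need at least two paired observations")
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        return 0.0
    return sxy / sqrt(sxx * syy)


def rank_features(features, target):
    """Sort candidate features by absolute correlation with the target, strongest first."""
    scores = {name: abs(pearson(values, target)) for name, values in features.items()}
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)
```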

Proactive Measures and Their Impact

Proactive measures, driven by the insights from predictive models, allow organizations to anticipate potential problems and opportunities. Instead of reacting to events as they occur, organizations can take steps to mitigate risks and capitalize on emerging trends, which often improves both efficiency and overall performance.

Proactive measures can also reduce costs. By anticipating issues before they arise, organizations can avoid costly disruptions and rework, and they can allocate resources more effectively, leading to higher returns on investment.

Types of Predictive Modeling Techniques

Various techniques are employed in predictive modeling, each suited to different types of data and prediction tasks. Regression analysis, for example, is used to model the relationship between a dependent variable and one or more independent variables. Classification techniques, on the other hand, are used to categorize data points into predefined classes. These diverse methods allow for the creation of models tailored to specific needs and datasets.

Decision trees, support vector machines, and neural networks are other common techniques, and each can be applied to either regression or classification tasks. Each has its own strengths and weaknesses, so the appropriate choice depends on the complexity of the problem and the characteristics of the data.
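The smallest possible decision tree is a decision stump: a single split on one feature. The sketch below fits a stump by trying a threshold between each pair of neighboring values (plus one below all of them) and keeping the split with the fewest training errors. The feature (failed logins per hour) and the labels are illustrative assumptions; a full decision tree would apply the same search recursively across many features.

```python
def predict_stump(stump, x):
    """polarity 1: class 1 above the threshold; polarity -1: class 1 at or below it."""
    threshold, polarity = stump
    above = x > threshold
    return int(above) if polarity == 1 else int(not above)


def fit_stump(xs, labels):
    """Exhaustively pick the (threshold, polarity) with the fewest training errors."""
    if not xs or len(xs) != len(labels):
        raise ValueError("need matching, non-empty xs and labels")
    values = sorted(set(xs))
    # Candidates: one threshold below every value, then midpoints between neighbors.
    candidates = [values[0] - 1] + [(a + b) / 2 for a, b in zip(values, values[1:])]
    best = None
    for threshold in candidates:
        for polarity in (1, -1):
            stump = (threshold, polarity)
            errors = sum(predict_stump(stump, x) != y for x, y in zip(xs, labels))
            if best is None or errors < best[0]:
                best = (errors, stump)
    return best[1]


# Illustrative training data: failed logins per hour, labeled benign (0) or malicious (1).
failed_logins = [1, 2, 3, 10, 12, 15]
malicious = [0, 0, 0, 1, 1, 1]
stump = fit_stump(failed_logins, malicious)
```

On this data the stump learns a threshold of 6.5 with polarity 1, so a session with 8 failed logins per hour would be classified as malicious.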

Applications of Predictive Modeling

Predictive modeling finds applications in a wide range of industries. In finance, it can be used to assess credit risk and predict stock prices. In healthcare, it can be used to identify patients at risk of developing certain diseases and predict treatment outcomes. In marketing, it can be used to target customers more effectively and predict sales.

These applications show how broadly predictive modeling applies. From personalized marketing campaigns to early disease detection, predictive models help organizations make better-informed decisions and achieve better outcomes.

Challenges and Considerations

Despite the numerous benefits, predictive modeling is not without challenges. Data quality and quantity are crucial factors that can significantly impact model accuracy. If the data used to train the model is incomplete, inaccurate, or biased, the predictions generated will likely be unreliable. Careful data preparation and validation steps are therefore essential.
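Basic data-quality checks can be automated before any training run. The sketch below separates records that are complete and within plausible ranges from those that are not, and keeps the reasons for each rejection so they can be investigated. The field names and ranges passed to it are hypothetical; checks like these catch missing and out-of-range values but not subtler problems such as sampling bias.

```python
def validate_records(records, required, ranges):
    """Split records into (clean, rejected); each rejected entry carries its reasons."""
    clean, rejected = [], []
    for record in records:
        problems = [f"missing {field}" for field in required if record.get(field) is None]
        for field, (low, high) in ranges.items():
            value = record.get(field)
            if value is not None and not low <= value <= high:
                problems.append(f"{field}={value} outside [{low}, {high}]")
        if problems:
            rejected.append((record, problems))
        else:
            clean.append(record)
    return clean, rejected
```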

Data privacy and security are also increasingly important considerations. Because predictive models often rely on sensitive personal data, organizations must implement robust security measures to protect this information and comply with relevant regulations. The ethical implications of how predictive models are used also deserve careful attention.

Implementing and Managing the Digital Twin Solution

Understanding the Core Concepts of Digital Twins

Digital twins are virtual representations of physical assets, processes, or systems. They leverage data from various sources to create a dynamic model that mirrors the real-world counterpart. This allows for detailed analysis, simulation, and optimization without impacting the actual operation. Understanding the fundamental concepts of digital twins is crucial for successful implementation and management, as it forms the basis for all subsequent steps.

Building this understanding involves recognizing the interconnectedness of data streams, the importance of accurate data representation, and the twin's ability to reflect real-time changes in the physical system. It also requires a deep understanding of the system being modeled, including its components, interactions, and operational parameters.

Data Acquisition and Integration Strategies

A critical aspect of implementing a digital twin solution is establishing robust data acquisition and integration strategies. This encompasses identifying the relevant data sources, selecting appropriate data formats, and developing secure methods for data transfer and storage. The quality and consistency of the data are paramount, as inaccuracies can lead to flawed simulations and unreliable insights. Data cleaning, validation, and transformation processes are essential steps to ensure data integrity.
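Integration often means mapping feeds with different conventions onto one schema. The sketch below assumes two hypothetical sources: an ERP export with ISO 8601 timestamps (including a UTC offset) and Celsius temperatures, and an IoT gateway reporting Unix epoch seconds and Fahrenheit. It converts both to UTC and Celsius and drops duplicate readings for the same asset and time, keeping the first one seen.

```python
from datetime import datetime, timezone


def normalize(record, source):
    """Map one raw reading from a hypothetical feed onto a common schema."""
    if source == "erp":
        # ERP export: ISO 8601 timestamp with UTC offset, temperature in Celsius.
        ts = datetime.fromisoformat(record["timestamp"]).astimezone(timezone.utc)
        temp_c = record["temp_c"]
    elif source == "iot":
        # IoT gateway: Unix epoch seconds, temperature in Fahrenheit.
        ts = datetime.fromtimestamp(record["ts"], tz=timezone.utc)
        temp_c = (record["temp_f"] - 32) * 5 / 9
    else:
        raise ValueError(f"unknown source: {source}")
    return {"asset_id": record["asset_id"], "timestamp": ts, "temp_c": round(temp_c, 2)}


def integrate(feeds):
    """Normalize every feed and keep the first reading per (asset_id, timestamp)."""
    merged = {}
    for source, records in feeds.items():
        for record in records:
            row = normalize(record, source)
            merged.setdefault((row["asset_id"], row["timestamp"]), row)
    return sorted(merged.values(), key=lambda r: (r["asset_id"], r["timestamp"]))
```

Keeping the first reading is an arbitrary tie-break; a production pipeline might instead prefer the more trusted source or flag conflicting duplicates for review.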

Model Development and Validation

Developing a precise and reliable digital twin model requires careful consideration of the chosen modeling techniques and the accuracy of the input data. Model validation is a crucial step to ensure that the digital twin accurately reflects the real-world system. This involves comparing the model's predictions with actual system performance data. Regular validation and recalibration are essential to maintain the model's accuracy and relevance as the system evolves.
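Comparing the twin's predictions with measured performance can be reduced to a few error metrics. The sketch below computes mean absolute error (MAE) and root-mean-square error (RMSE) and flags the model for recalibration when RMSE exceeds a tolerance; the tolerance value and the choice of RMSE as the trigger are assumptions to tune for each system.

```python
from math import sqrt


def validation_report(predicted, observed, rmse_tolerance):
    """Error metrics for twin predictions against measured values, plus a recalibration flag."""
    if not predicted or len(predicted) != len(observed):
        raise ValueError("need matching, non-empty prediction and observation series")
    errors = [p - o for p, o in zip(predicted, observed)]
    mae = sum(abs(e) for e in errors) / len(errors)
    rmse = sqrt(sum(e * e for e in errors) / len(errors))
    return {"mae": mae, "rmse": rmse, "needs_recalibration": rmse > rmse_tolerance}
```

For predicted throughputs `[100, 102, 98, 105]` against observed `[101, 100, 99, 110]`, the MAE is 2.25 and the RMSE is about 2.78, so a tolerance of 2.0 would trigger recalibration. RMSE weights large misses more heavily than MAE, which is why it is used as the trigger here.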

Simulation and Analysis Capabilities

A significant advantage of digital twins is their ability to simulate various scenarios and conditions. This allows for testing different strategies and optimizations without the risks and costs associated with real-world experimentation. Advanced simulation tools and analysis techniques can be utilized to explore the potential impacts of various changes, including design modifications, operational adjustments, and environmental factors. This predictive capability is essential for optimizing performance and proactively addressing potential issues.
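Scenario analysis in a twin is often done by Monte Carlo simulation: run the same model many times with random disruptions and look at the distribution of outcomes. The sketch below simulates a single-product flow in which a supplier outage (for example, one caused by a cyberattack) can start on any day with a fixed probability and last a fixed number of days, and it estimates the fill rate, the share of demand actually shipped. All parameters and the inventory logic are simplifying assumptions.

```python
import random


def simulate_fill_rate(days, daily_demand, daily_supply, start_inventory,
                       outage_prob, outage_days, rng):
    """One simulated run; returns the share of total demand that was shipped.

    Each day, if no outage is in progress, a supplier outage starts with
    probability outage_prob and blocks replenishment for outage_days days.
    Unmet demand is lost rather than backordered (a simplifying assumption).
    """
    inventory = start_inventory
    outage_left = 0
    shipped_total = 0
    for _ in range(days):
        if outage_left == 0 and rng.random() < outage_prob:
            outage_left = outage_days
        if outage_left > 0:
            outage_left -= 1  # no replenishment today
        else:
            inventory += daily_supply
        shipped = min(inventory, daily_demand)
        inventory -= shipped
        shipped_total += shipped
    return shipped_total / (days * daily_demand)


def monte_carlo(runs, seed=0, **params):
    """Repeat the simulation with a seeded generator; return (mean, worst) fill rate."""
    rng = random.Random(seed)
    results = [simulate_fill_rate(rng=rng, **params) for _ in range(runs)]
    return sum(results) / len(results), min(results)
```

Seeding the generator makes runs reproducible, so a change in the estimated fill rate can be attributed to a changed parameter (for example, a larger safety stock in `start_inventory`) rather than to random noise.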

Implementation and Deployment Strategies

The successful implementation of a digital twin solution requires a well-defined deployment strategy. This includes careful planning, phased rollout, and ongoing monitoring. Choosing the right platform and infrastructure is crucial for scalability and maintainability. Integration with existing systems and workflows should be considered to minimize disruption and maximize efficiency.

Security and Data Privacy Considerations

As digital twins rely heavily on data, security and data privacy are paramount concerns. Robust security measures must be implemented to protect sensitive information and prevent unauthorized access. Compliance with relevant data privacy regulations is critical. Establishing clear access controls and data encryption protocols will help to mitigate risks and maintain the integrity of the digital twin.
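One concrete integrity control is to authenticate every message the twin receives, so that tampered or spoofed telemetry is rejected before it reaches the model. The sketch below uses HMAC-SHA256 from Python's standard `hmac` and `hashlib` modules over a canonical JSON encoding; the message layout is a hypothetical example. HMAC provides integrity and authenticity, not confidentiality, so transport encryption such as TLS and proper key management are still needed.

```python
import hashlib
import hmac
import json


def _canonical(payload):
    """Serialize deterministically so sender and receiver hash identical bytes."""
    return json.dumps(payload, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign_message(payload, key):
    """Attach an HMAC-SHA256 tag computed over the canonical payload."""
    tag = hmac.new(key, _canonical(payload), hashlib.sha256).hexdigest()
    return {"payload": payload, "hmac": tag}


def verify_message(message, key):
    """Recompute the tag and compare in constant time to avoid timing leaks."""
    expected = hmac.new(key, _canonical(message["payload"]), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, message["hmac"])
```

In practice the shared key would come from a secrets manager or hardware security module rather than being embedded in code.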

Maintenance and Continuous Improvement

Digital twin solutions are not static; they require ongoing maintenance and continuous improvement. Regular updates and recalibrations are necessary to ensure the model remains accurate and relevant. Continuous monitoring of the real-world system and feedback loops are essential to refining the digital twin model and improving its predictive capabilities. This iterative process allows for adaptation to changing conditions and ensures the digital twin remains a valuable tool for ongoing optimization and decision-making.
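A feedback loop can be as simple as tracking prediction error over time and flagging when it drifts upward. The sketch below keeps an exponentially weighted moving average (EWMA) of the absolute error between the twin's predictions and new observations; the `DriftMonitor` class, the smoothing factor `alpha`, and the `threshold` are hypothetical and would need tuning, and a real deployment would likely combine this with scheduled recalibration.

```python
class DriftMonitor:
    """Tracks an EWMA of absolute prediction error and flags sustained drift."""

    def __init__(self, alpha, threshold):
        if not 0 < alpha <= 1:
            raise ValueError("alpha must be in (0, 1]")
        self.alpha = alpha          # weight given to the newest error
        self.threshold = threshold  # EWMA level that suggests recalibration
        self.ewma = None

    def update(self, predicted, observed):
        """Feed one new observation; return True when recalibration looks warranted."""
        error = abs(predicted - observed)
        if self.ewma is None:
            self.ewma = error
        else:
            self.ewma = self.alpha * error + (1 - self.alpha) * self.ewma
        return self.ewma > self.threshold
```

A smaller `alpha` smooths out one-off spikes but reacts more slowly to genuine changes in the physical system; a larger one does the opposite.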
